Meta's display chief names 10 features for a perfect VR headset

There are still many hurdles to overcome before virtual reality reaches its technical potential. The head of Meta's display division lists the challenges.

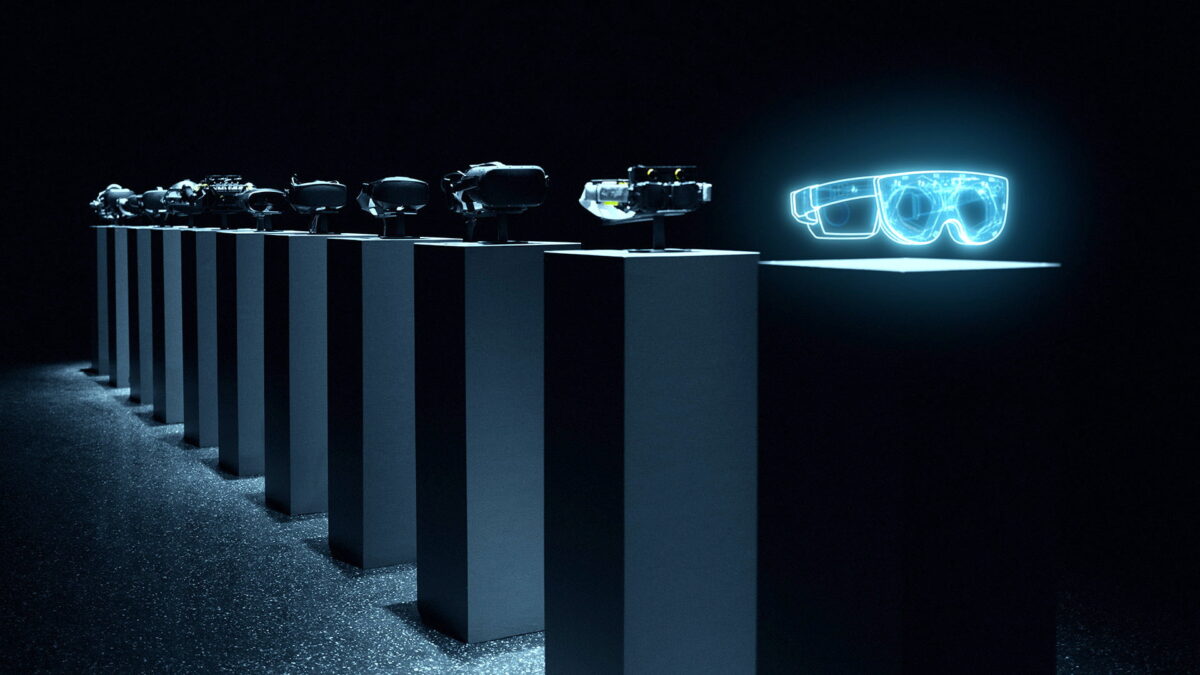

Douglas Lanman has worked at Meta for eight years and heads the department that develops display systems. In June, his team unveiled new VR glasses prototypes. The prototypes address different technical challenges such as resolution, brightness, and form factor. The researchers' goal is a VR display capable of visually reproducing physical reality while fitting into a compact headset.

At this year's Siggraph conference, Lanman gave a presentation on the top ten hurdles still to be overcome in developing a near-perfect VR headset. What follows is a list of those ten challenges, each with a brief explanation. You can find the video of Lanman's talk at the end of the article.

The ten challenges


Higher resolution

Current VR headsets don't come close to human vision in terms of resolution. For virtual worlds to look as real and sharp as the physical world, and for text to remain legible even at medium distances, the resolution of VR displays must increase significantly.

Meta names 8K per eye and an angular pixel density of 60 pixels per degree (PPD) as a preliminary target. For comparison, the Meta Quest 2 achieves only 2K per eye and 20 PPD.
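The relationship between these figures can be checked with a little arithmetic. The sketch below is only a rough illustration: it assumes that pixels are spread evenly across the field of view (real lenses do not do this), that the horizontal field of view is the roughly 100 degrees typical of commercially available headsets, and that "8K" means about 7,680 horizontal pixels. The function names are made up for this example.

```python
def horizontal_pixels(ppd: float, fov_degrees: float) -> float:
    """Pixels needed across the field of view at a given pixels-per-degree value.

    Assumes pixels are distributed uniformly over the field of view.
    """
    return ppd * fov_degrees


def fov_covered(pixels: float, ppd: float) -> float:
    """Field of view (in degrees) that a pixel row can cover at a given PPD."""
    return pixels / ppd


if __name__ == "__main__":
    ASSUMED_FOV = 100      # degrees, assumption for a typical headset
    ASSUMED_8K = 7680      # horizontal pixels, assumption for "8K"

    print(horizontal_pixels(20, ASSUMED_FOV))   # Quest 2 class density
    print(horizontal_pixels(60, ASSUMED_FOV))   # Meta's preliminary target density
    print(fov_covered(ASSUMED_8K, 60))          # degrees an 8K row covers at 60 PPD
```

Under these assumptions, 20 PPD across 100 degrees works out to about 2,000 pixels, which is consistent with the "2K" figure, while 60 PPD across the same field would need about 6,000.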

The higher the resolution, the sharper the image. Oculus Rift, Meta Quest 2, and the Butterscotch prototype in comparison. | Image: Meta

With the Butterscotch prototype, Meta developed an experimental headset that allows researchers to experience "retina resolution" and assess its immersive effect. The development and production of high-resolution displays is not the biggest problem. Rather, the challenge is providing the computing power needed to drive such high-resolution displays inside a headset. Foveated rendering and cloud streaming could help, but they present major technical challenges in themselves.

Wider field of view

Much also needs to be done in terms of field of view, Lanman says. The human horizontal field of view is about 200 degrees wide. Commercially available VR headsets generally achieve a horizontal field of view of 100 degrees. There is still room for improvement in the vertical field of view as well.

A wider field of view places great demands on lens technology, and the difficulty shows up as image distortion at the edges of the field of view. Here, too, the question of computing power arises. The wider the field of view, the more pixels the VR headset has to display. This results in higher power requirements and more waste heat.

Ergonomics

VR headsets are still heavy and bulky. Meta Quest 2, for example, weighs over a pound and protrudes almost 3 inches from the face. Ideally, VR headsets should be comfortable to wear for long periods and be far slimmer and lighter in design.

The image shows the Holocake-1 prototype, which comes in the form of sunglasses. Shown transparently is an Oculus Rift from 2016. | Image: Meta

Pancake lenses and holographic lenses could help. Meta's fully functional Holocake 2 prototype shows the direction the form factor could take. The problem: Holocake 2 uses custom-built lasers as a light source, and these are not yet developed to the point where they can be mass-produced.

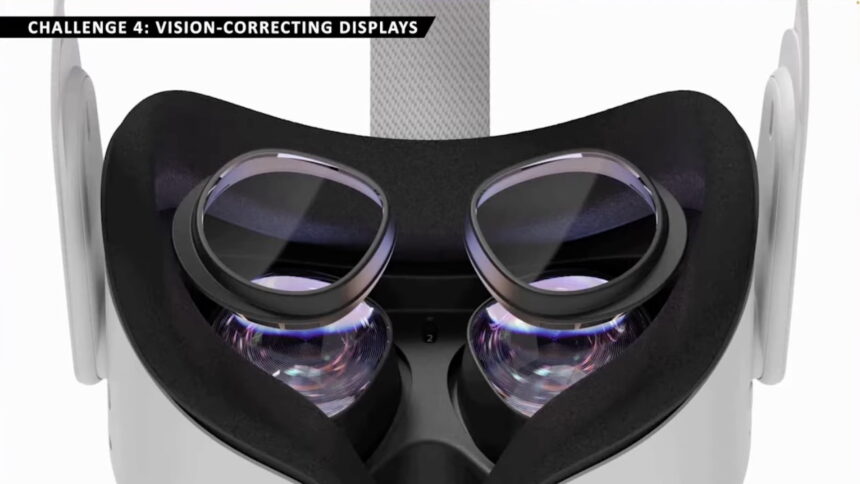

Display with vision correction

The perfect VR headset should be able to detect and compensate for vision deficiencies in users so that conventional glasses or contact lenses are not needed to see well in virtual reality. Will prescription frames fit under the VR headset without scratching the lenses or pushing down on the wearer's face? Consumers should not have to deal with such questions in the future.

The problem could be solved with special attachments or, even better, with a lens that can be adjusted to the user's own visual acuity.

Lens attachments with vision correction already exist. But they are not an ideal solution. | Image: Meta

Variable focus

The human eye cannot focus naturally in VR environments, which is particularly annoying at close range and can lead to eye fatigue and headaches after prolonged use. This phenomenon is known as the vergence-accommodation conflict.
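Why the conflict is worst at close range can be illustrated with simple geometry. The following is a minimal sketch, not a description of any actual headset: it assumes an interpupillary distance of 63 mm, a display with a single fixed focal distance of 1.3 m, and a virtual object straight ahead of the viewer. Both numbers and all function names are hypothetical values chosen for illustration.

```python
import math


def vergence_angle_deg(distance_m: float, ipd_m: float = 0.063) -> float:
    """Angle between the two eyes' lines of sight when fixating an object.

    ipd_m is an assumed interpupillary distance; the object is assumed
    to lie straight ahead of the viewer.
    """
    return math.degrees(2 * math.atan((ipd_m / 2) / distance_m))


def accommodation_mismatch_diopters(virtual_distance_m: float,
                                    focal_distance_m: float = 1.3) -> float:
    """Difference between where the eyes converge and where they must focus.

    The eyes converge on the virtual object, but the lens of the eye has to
    focus at the display's fixed focal distance (an assumed value here).
    Focus demand is expressed in diopters, i.e. 1 / distance in meters.
    """
    return abs(1 / virtual_distance_m - 1 / focal_distance_m)


if __name__ == "__main__":
    for d in (0.3, 1.3, 2.0):
        print(d, vergence_angle_deg(d), accommodation_mismatch_diopters(d))
```

With these assumed values, an object at 0.3 m produces a mismatch of roughly 2.6 diopters, while an object at 2 m stays below 0.3 diopters, which matches the observation that the problem is most noticeable up close.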

To solve this problem, Meta's researchers developed a display that supports "progressive vision." The display simulates different focal planes as well as blurring, helping the eye to see the virtual world the way it sees the natural one. Meta's varifocal prototypes are called "Half-Dome."

Eye tracking for all

Eye tracking is a key technology in virtual reality. It is the foundation of many other important VR technologies such as progressive vision (see "Variable focus"), foveated rendering, and distortion correction (see "Distortion correction"). It also enables eye contact in social experiences and new forms of interaction.

The shape of the pupil differs from person to person. This is a challenge for eye-tracking systems. | Image: Meta

The problem with eye tracking is that it doesn't work equally well for all people and occasionally has dropouts. A reliable solution with broad demographic coverage is needed. Otherwise, the technology frustrates rather than helps and is rejected by consumers.

Distortion correction

Lenses inherently introduce image distortion that must be corrected by software. The slightest movement of the pupil causes fine but noticeable distortions. They impair visual realism, especially in combination with other technologies such as progressive displays.
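As a rough idea of what software correction means, the sketch below uses a generic textbook model of radial lens distortion with a single coefficient. It is not Meta's algorithm, the coefficient value is hypothetical, and it ignores the pupil-dependent effects described above. The image is pre-warped with the inverse of the lens distortion so that the two cancel out when the light passes through the lens.

```python
def lens_distort(x: float, y: float, k1: float) -> tuple[float, float]:
    """Simple radial distortion model: points move outward with radius squared.

    Coordinates are normalized, with (0, 0) at the optical center.
    k1 is an assumed, illustrative distortion coefficient.
    """
    scale = 1 + k1 * (x * x + y * y)
    return x * scale, y * scale


def prewarp(x: float, y: float, k1: float, iterations: int = 20) -> tuple[float, float]:
    """Find the point that the lens will distort onto (x, y).

    Inverts the radial model numerically by fixed-point iteration, which
    converges for the moderate distortion values used in this sketch.
    """
    px, py = x, y
    for _ in range(iterations):
        scale = 1 + k1 * (px * px + py * py)
        px, py = x / scale, y / scale
    return px, py


if __name__ == "__main__":
    K1 = 0.2                      # hypothetical coefficient
    target = (0.5, 0.5)           # where the pixel should appear to the eye
    warped = prewarp(*target, K1) # where software has to draw it instead
    print(warped, lens_distort(*warped, K1))
```

Drawing each pixel at its pre-warped position means that, after the lens has distorted it, the pixel ends up where it was meant to appear.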

To accelerate the development of correction algorithms, Meta's researchers developed a distortion simulator. It can be used to test different lenses, resolutions, and fields of view without having to build test headsets and special lenses.

High Dynamic Range (HDR)

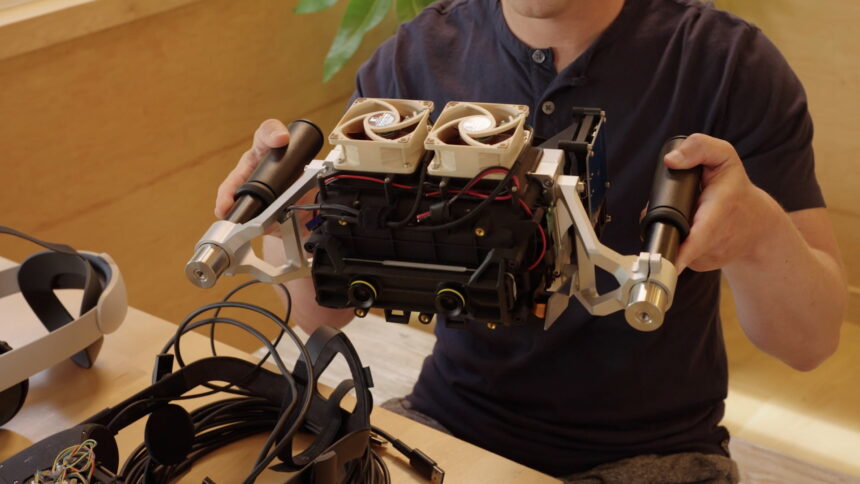

Physical objects and environments are far more luminous than VR displays, even indoors with artificial lighting. Meta built Starburst, a VR prototype capable of displaying up to 20,000 nits. For comparison, a good HDR TV reaches several thousand nits, while Meta Quest 2 offers just 100 nits.

Mark Zuckerberg holds the Starburst prototype. | Image: Meta

Starburst can realistically simulate lighting conditions in closed rooms and night environments. The current prototype is so heavy that it hangs from the ceiling, and it is extremely energy-hungry.

According to Meta, HDR contributes more to visual realism than, say, resolution and variable focus, but is the furthest from practical implementation.

Visual realism

The perfect VR headset must be translucent in both directions. VR users should be able to see their surroundings just as well as people around them can see the VR users. This matters both for user comfort and for social acceptance.

Headset sensors record the environment and display it as a video image in virtual reality. This technology, called passthrough, already exists in commercially available VR headsets, albeit in rather poor quality. Meta Quest 2, for example, offers a passthrough mode in grainy black and white. Meta Quest Pro is supposed to improve this display mode noticeably with a higher resolution and color.

On the left is an Oculus Rift that blocks the view of the VR user's eyes. On the right is an early reverse-passthrough prototype. | Image: Meta

However, this does not yet achieve a perfect reconstruction of the physical environment. One of the problems yet to be solved is that the passthrough technology captures a perspective of the world that is spatially displaced from the eyes, which can be irritating during prolonged use. To this end, Meta is researching AI-assisted gaze synthesis that generates perspective-correct viewpoints in real-time and with high visual fidelity.

In the reverse case, which Meta calls "Reverse Passthrough," outsiders see the eyes and faces of VR users and can thus make eye contact or read facial expressions. This could be made possible by inward-facing sensors and an external (light field) display. This technology is still far from being ready for the market, as Meta's research shows.

Facial reconstruction

Co-presence and metaverse telephony are Meta's ultimate goals. The company wants people to one day meet in virtual spaces and feel as if they are present in the same room. To this end, Meta is researching photorealistic codec avatars, but they are still very costly to produce and compute.

A first step in this direction is VR headsets that can read the facial expressions of VR users in real-time and transfer them to virtual reality. Quest Pro will be Meta's first headset to offer face-tracking.

Quest Pro can recognize facial expressions and transfer them to VR. | Image: Meta

Douglas Lanman's Siggraph talk
