Next-gen VR: Meta shows its latest headset prototypes

Meta offers another look inside its research labs and shows where VR displays could be headed in the coming years.

Last fall, Mark Zuckerberg realigned his company: Facebook became Meta, with the goal of laying the foundations of the Metaverse. Zuckerberg showed a vision of the future where people meet, play and work in virtual worlds.

Today's VR technology doesn't live up to that sci-fi vision. The core of a VR headset, the display, has barely evolved in the last decade and is still fairly primitive: two relatively low-resolution smartphone-style screens sit behind lenses that trick the eyes into perceiving a 3D world.

To unlock the full visual potential of Virtual Reality, more is needed: a new kind of VR display that matches the power of human vision.


VR displays: beyond conventional screens

Meta has been working on new kinds of VR displays since 2015, and now it's sharing the deepest look yet at its display research: from early prototypes to next-generation VR displays to still-unrealized futuristic concepts.

The research shows the direction Virtual Reality could take in the upcoming years, and that the display technology involved goes far beyond that of traditional 2D screens.

"One of the reasons why this is such an exciting space to work in is that this is genuinely new technology," said Meta CEO Mark Zuckerberg. "We're not just refining the types of screens that we've had on phones or TVs or computer monitors for decades and that already exist. We have to explore new ground in how physical systems can work together and how our visual system perceives the world."

The visual Turing test: four challenges

The goal is to develop a VR display that passes the visual Turing test and fits into a VR headset that is both slim and lightweight.

"In 1950, Alan Turing conceived the Turing Test in 1950 to evaluate whether a computer could pass as a human. The visual Turing test, which is a phrase that we've adopted, along with a number of other academic researchers, is a way to evaluate whether what's displayed in VR can be distinguished from the real world," explains Meta's chief scientist Michael Abrash. Currently, he said, no VR technology can pass this test.

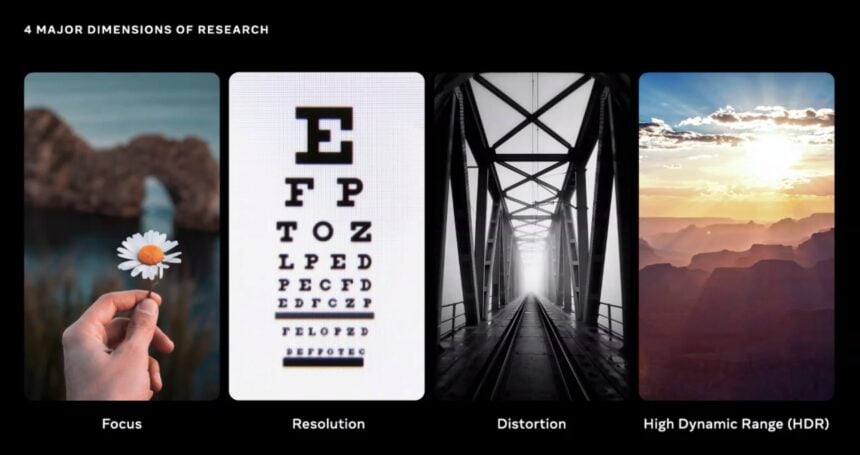

Pictorial representation of the four major VR display challenges. | Image: Meta

The Display Systems Research (DSR) team, which specializes in display development, has defined four characteristics that VR displays must meet in order to pass the visual Turing test. They should

- enable natural focusing of virtual objects (see Vergence-Accommodation Conflict)

- achieve a resolution equivalent to retinal vision

- compensate for optical distortions caused by lenses, and

- support HDR, i.e., cover a much wider range of brightness, contrast, and color than today's VR displays do

Why is visual realism important?

According to Mark Zuckerberg, a VR display that meets all these conditions and is adapted to human vision opens up new possibilities: on the one hand, it enhances social presence, i.e., the feeling that other people are present in the same virtual space; on the other hand, it could "unlock a whole new generation of visual experiences" that open up new forms of expression and thus carry great cultural implications.

"Instead of just looking at them on a screen, you're going to feel like you're there, experiencing things that you'd otherwise never get to experience. And that feeling, the richness of that experience, that's why this sense of realism matters," Zuckerberg said.

Some of the technical challenges mentioned above have already been solved, he said, while others still require a lot of work. And beyond display technology, many more breakthroughs are needed.

"Building these devices, it's an integrated effort that includes not just displays, but also state-of-the-art work on software and silicon and sensors and other hardware that need to work together seamlessly," Zuckerberg said.

The current research insight, he said, is only about the "last link in the chain": display systems. The DSR team is presenting a series of prototypes, some familiar and some new, that represent attempts to overcome the above challenges.

Meta is not saying exactly when these display technologies will find their way into commercial headsets. Zuckerberg cites "coming years" as a rough time frame.

Half-Dome: Prototype with varifocal display

Meta already showed the Half-Dome prototypes in 2018 and 2019. The goal of this research is to develop a varifocal display system that can be built into commercial VR headsets. Varifocal means that wearers can focus on virtual objects at different distances, just as they would on objects in the physical environment.

This is not a given: VR headsets to date have a single, fixed focal plane. The eyes converge on a virtual object at its apparent distance, but they always have to focus at that one fixed distance. This so-called vergence-accommodation conflict can cause blurred vision, eye strain, and even nausea, and it makes longer sessions in Virtual Reality strenuous for the eyes or rules them out altogether. A solution to this problem is therefore urgently needed.
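To make the conflict concrete, here is a minimal Python sketch that expresses both distances in diopters (1 divided by the distance in metres), the usual unit for focus. The 1.5 m focal plane and the 63 mm interpupillary distance are assumed values chosen for illustration, not specifications of any Meta headset.

```python
import math

def diopters(distance_m: float) -> float:
    """Optical power in diopters for a focus distance in metres (D = 1 / m)."""
    return 1.0 / distance_m

def vergence_angle_deg(distance_m: float, ipd_m: float = 0.063) -> float:
    """Angle between the two lines of sight when fixating a point straight ahead.

    ipd_m is an assumed interpupillary distance of 63 mm.
    """
    return math.degrees(2.0 * math.atan((ipd_m / 2.0) / distance_m))

def va_mismatch(virtual_distance_m: float, focal_plane_m: float) -> float:
    """Vergence-accommodation mismatch in diopters.

    The eyes converge on the virtual object, but must focus on the
    headset's single fixed focal plane.
    """
    return abs(diopters(virtual_distance_m) - diopters(focal_plane_m))

# Hypothetical fixed focal plane of 1.5 m; not the spec of any real headset.
FOCAL_PLANE_M = 1.5

for d in (0.3, 0.5, 1.5, 5.0):
    print(f"object at {d} m: vergence {vergence_angle_deg(d):.1f} deg, "
          f"mismatch {va_mismatch(d, FOCAL_PLANE_M):.2f} D")
```

In this simple model the mismatch vanishes only when the virtual object sits exactly at the focal plane; a varifocal display tries to keep it near zero by moving the focal plane to wherever the user is looking.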

All Half-Dome prototypes so far. | Image: Meta

Meta's first varifocal prototype was built in 2015 and was very bulky. In 2017, the team developed Half-Dome Zero, a device with the form factor of an Oculus Rift that works with PC VR games. With this headset, Meta conducted an internal study that demonstrated the clear benefits of a varifocal display system: participants preferred varifocal focus because it produced a much more comfortable and sharper visual experience and made it easier to read text and identify small objects.

Two frog images in VR; the one in the back is out of focus. Such effects are not yet possible with today's VR headsets. | Image: Meta

The team made an important breakthrough with Half-Dome 3 (2019): this prototype relies on so-called liquid crystal lenses, which eliminate the moving parts of earlier prototypes and shift the focal plane by switching between electrical states. Another advantage of this technology is the much slimmer form factor it allows. Unfortunately, Meta did not show any newer Half-Dome prototypes; the last known device remains Half-Dome 3.
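One way to picture how switchable lenses can replace moving parts: if each element in a stack adds a fixed optical power when switched on, the headset can pick the on/off combination closest to the depth the user is looking at. The Python sketch below assumes exactly that model with a hypothetical four-element stack; the article gives neither the number of lenses nor their powers in Half-Dome 3.

```python
from itertools import product

def nearest_focal_state(target_d, lens_powers_d):
    """Pick on/off states for a stack of switchable lenses.

    Each element adds its optical power (in diopters) when switched on and
    nothing when switched off, so the stack offers 2**N discrete focal
    states. Returns (achieved_power, states) for the state closest to target_d.
    """
    best = None
    for states in product((False, True), repeat=len(lens_powers_d)):
        power = sum(p for p, on in zip(lens_powers_d, states) if on)
        if best is None or abs(power - target_d) < abs(best[0] - target_d):
            best = (power, states)
    return best

# Hypothetical lens powers in diopters; not Half-Dome 3's real values.
POWERS = [0.25, 0.5, 1.0, 2.0]

# Gaze depth from eye tracking, e.g. an object about 0.77 m away, i.e. about 1.3 D.
power, states = nearest_focal_state(1.3, POWERS)
print(power, states)  # 1.25 (True, False, True, False)
```

Brute force over 2**N states is fine for a handful of elements; the point is only that focus changes become a choice among discrete electrical states rather than a mechanical movement.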

Butterscotch: VR prototype with “retina resolution”

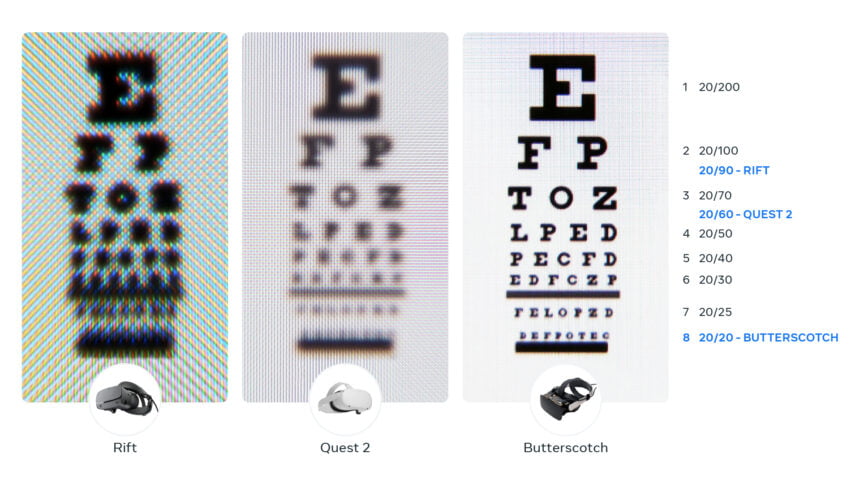

Another significant condition for visual realism is resolution. Meta estimates that VR headsets need to achieve 8K resolution per eye and a pixel density of 60 PPD (pixels per degree) to match human visual acuity and produce an image indistinguishable from reality. By comparison, today's VR headsets such as Meta Quest 2 are in the range of 2K resolution and 20 PPD.

The Butterscotch prototype with partially open housing. | Image: Meta

In addition to the number of pixels, their quality must also be improved. "Today's VR headsets have substantially lower color range, brightness, and contrast than laptops, TVs, or mobile phones," explains Michael Abrash. "So VR can't really get to that level of fine detail and accurate representation that we've become accustomed to with our 2D displays."

Meta has developed several retina-resolution prototypes. Butterscotch is the latest in this class and the first one Meta has shown to the public. According to Abrash, it offers a pixel density of 55 PPD, about two and a half times the resolution of Meta Quest 2. To achieve this, the team developed a new hybrid lens and cut the usual field of view in half.
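Pixels per degree is, to a first approximation, the horizontal pixel count per eye divided by the horizontal field of view. The Python sketch below uses that average-PPD simplification; the 1832-pixel Quest 2 panel width is the commonly quoted figure, while the 90- and 120-degree fields of view are rough assumptions for illustration.

```python
def ppd(horizontal_pixels: int, horizontal_fov_deg: float) -> float:
    """Average pixels per degree across the field of view.

    Simplification: real lenses spread pixels unevenly, so the centre
    of the view usually gets a different PPD than this average.
    """
    return horizontal_pixels / horizontal_fov_deg

def pixels_needed(target_ppd: float, horizontal_fov_deg: float) -> int:
    """Horizontal pixels per eye required to reach target_ppd on average."""
    return round(target_ppd * horizontal_fov_deg)

# Assumed: 1832 px per eye horizontally (Quest 2) over a rough 90-degree FOV.
print(round(ppd(1832, 90), 1))   # 20.4
# Retina target of 60 PPD over an assumed 120-degree FOV.
print(pixels_needed(60, 120))    # 7200
# Same panel, half the FOV: the average PPD doubles.
print(round(ppd(1832, 45), 1))   # 40.7
```

The last line shows why halving the field of view is such an effective lever. In this simple model, halving alone would not reach 55 PPD with a Quest 2-class panel.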

Comparison of the visual acuity of different VR headsets. | Image: Meta

That's one of the reasons the device isn't ready for production yet. "It's heavy, it's bulky, but it does a great job of showing how much of a difference higher resolution makes for the VR experience," Abrash said. He added that it can even show details on the leaves of a tree.

Countering optical distortion: a simulator allows for rapid prototyping

Another obstacle to passing the visual Turing test is the distortion caused by the refraction of light in the headset's lenses. In Meta Quest 2, this is corrected in software.

"It's a pretty good approximation for now, but it isn't exactly right a lot of the time because the distortion of a virtual image changes as your eye moves around to look in different directions," Abrash explains. Perfect distortion correction would be dynamic, adapting to eye movements in real time.

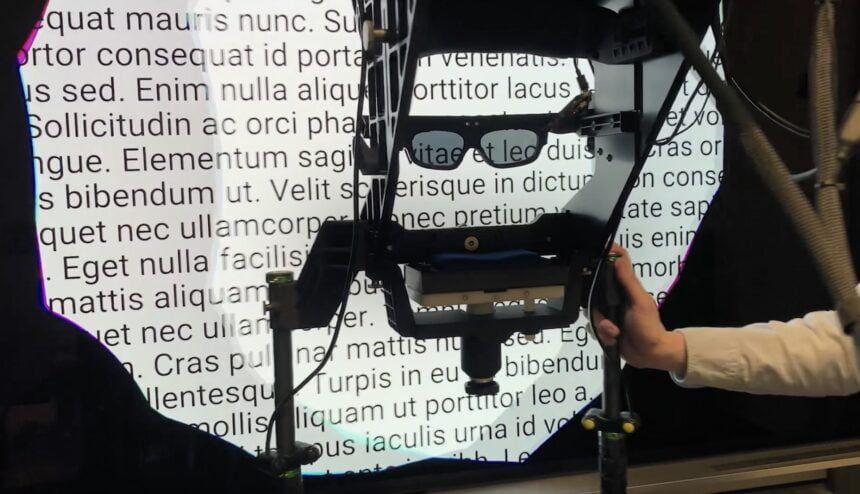

This device allows rapid prototyping of lenses and distortion correction algorithms. | Image: Meta

The team made a methodological breakthrough in this area, developing a device that allows distortion corrections to be simulated and evaluated within minutes instead of months, as was previously the case.

"What the team did was they built a distortion simulator that uses virtual objects and eye tracking to replicate the distortion you would see for a headset for a given optics design, and display it using 3D TV technology," Abrash said.

"This allows the team to study different optical designs and distortion correction algorithms without needing to build an actual headset. With this unique rapid prototyping capability, the team has been able to explore dynamic distortion correction in literally minutes rather than months."

This research will be used to develop new lenses and correction algorithms.

Starburst: VR prototype with HDR screen

The final major challenge is to develop a VR display that is capable of HDR. Zuckerberg places special emphasis on this task, calling HDR capability "arguably the most important dimension" of those mentioned so far, at least when it comes to visual realism.

"Basically, it's the overall brightness and contrast of a display, because our experience is that when lights are bright, you see colors pop and shadows are dark, and then that's when the scenes really start to feel alive," Zuckerberg said. "But the issue today is that the vividness of screens that we have now compared to what your eye sees in the physical world is off by an order of magnitude or more."

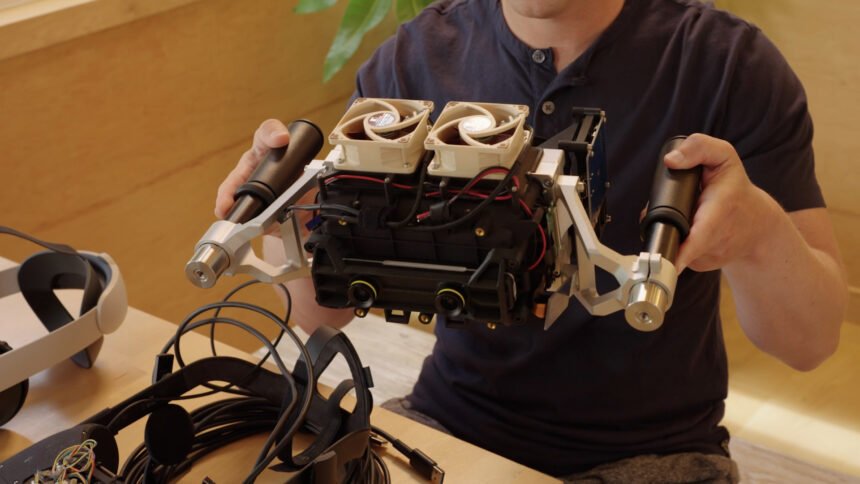

Zuckerberg holds the giant Starburst prototype by two handles. It reaches 20,000 nits. | Image: Meta

The luminance of a screen is measured in nits. Modern HDR TVs achieve several thousand nits, while Quest 2 reaches only 100 nits.

To test the effect of an HDR headset, the DSR team combined an LC display with a powerful lamp. The result is a VR prototype called Starburst that reaches 20,000 nits. This level of brightness can accurately simulate indoor lighting conditions or nighttime environments.
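The gap Zuckerberg describes is easiest to see on a logarithmic scale. This short Python calculation uses the article's 100 and 20,000 nit figures; the 2,000 nits for an HDR TV is an assumed stand-in for "several thousand".

```python
import math

def orders_of_magnitude(a_nits: float, b_nits: float) -> float:
    """How many factors of ten separate two luminance levels."""
    return math.log10(a_nits / b_nits)

QUEST_2 = 100        # nits, figure from the article
HDR_TV = 2_000       # nits, assumed value for "several thousand"
STARBURST = 20_000   # nits, figure from the article

for name, nits in (("HDR TV", HDR_TV), ("Starburst", STARBURST)):
    print(f"{name}: {nits / QUEST_2:.0f}x Quest 2, "
          f"{orders_of_magnitude(nits, QUEST_2):.1f} orders of magnitude")
```

Under these assumptions Starburst is about 200 times brighter than Quest 2, a bit more than two orders of magnitude.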

The drawbacks of the first HDR prototype are obvious: The device is corded and so bulky and heavy that it must be held by two handles.

"It's, to be clear, wildly impractical in this first generation for anything that you'd actually ship in a product. But we're using it to test and for further studies so we can get a sense of what the experience feels like," Zuckerberg said.

Holocake 2: VR prototype with ultra-compact form factor

Advanced display systems aren't everything: They also need to fit into a VR headset that's as slim, lightweight and affordable as possible.

Meta is showing off another VR prototype that demonstrates advances in form factor: Holocake 2, Meta's thinnest and lightest VR goggles to date.

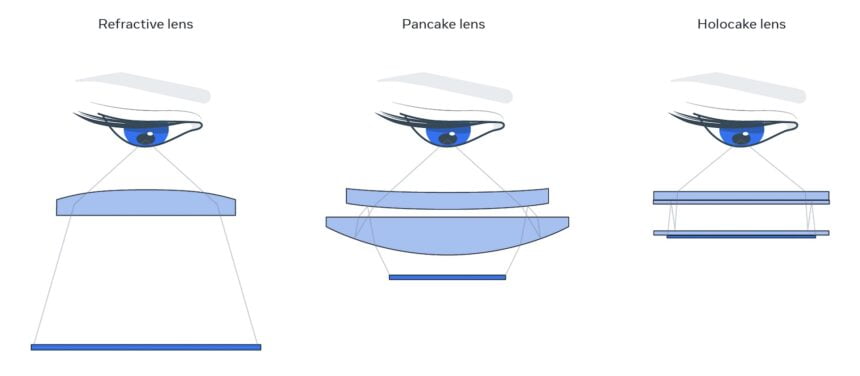

The name derives from the optics used to create an ultra-compact design: a previously unseen combination of holographic lens and pancake lens.

Mark Zuckerberg with Holocake 2, which has a light shield attached. | Image: Meta

The holographic lens stems from research that Meta presented two years ago and is exceptionally thin compared to conventional lenses.

By using pancake lenses, Meta was also able to significantly reduce the distance between the display and the lenses. Pancake lenses are closer to market than holographic lenses and will be incorporated into Meta's upcoming Cambria VR headset.
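Pancake optics save space by folding the light path. In an idealized pancake design, light crosses the gap between a partially reflective mirror and a reflective polarizer three times before it leaves, so a given optical path fits into roughly a third of the physical depth. The Python sketch below encodes only that idealized relation; real designs also depend on the lens powers, and the 39 mm optical path is a hypothetical value.

```python
def folded_gap_mm(optical_path_mm: float, passes: int = 3) -> float:
    """Physical depth needed when the light crosses the same cavity `passes` times.

    Idealised pancake model: light goes forward through the half-mirror, is
    reflected back by the reflective polariser, then forward again off the
    half-mirror, so it crosses the cavity three times.
    """
    if passes < 1:
        raise ValueError("passes must be at least 1")
    return optical_path_mm / passes

# Hypothetical optical path length; an unfolded design needs all of it as depth.
OPTICAL_PATH_MM = 39.0

print(folded_gap_mm(OPTICAL_PATH_MM, passes=1))  # 39.0 (conventional, single pass)
print(folded_gap_mm(OPTICAL_PATH_MM))            # 13.0 (pancake, three passes)
```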

Holocake 2 is a fully functional PC VR headset that rivals Meta Quest 2 in field of view, resolution, and eyebox, with a much thinner profile. However, it is tethered by a cable and, unlike Quest 2, has no built-in computing unit or cooling, which would otherwise make it thicker.

Comparison between three different lens types. Classic VR optics with a large distance between the display and the lens (left), pancake optics with a greatly reduced distance (center) and holocake optics, which also reduces the thickness of the lens. | Image: Meta

Another special feature of Holocake 2 is the light source: for reasons of brightness and color fidelity, it consists of lasers instead of LEDs. Laser technology has one drawback: it is still far from being ready for mass production.

"We'll need to do a lot of engineering to achieve a consumer viable laser that meets our specs — that's safe, low-cost, and efficient and that can fit in a slim VR headset. As of today, the jury is still out on finding a suitable laser source, but if that proves tractable, there will be a clear path to sunglasses-like VR displays", Abrash said.

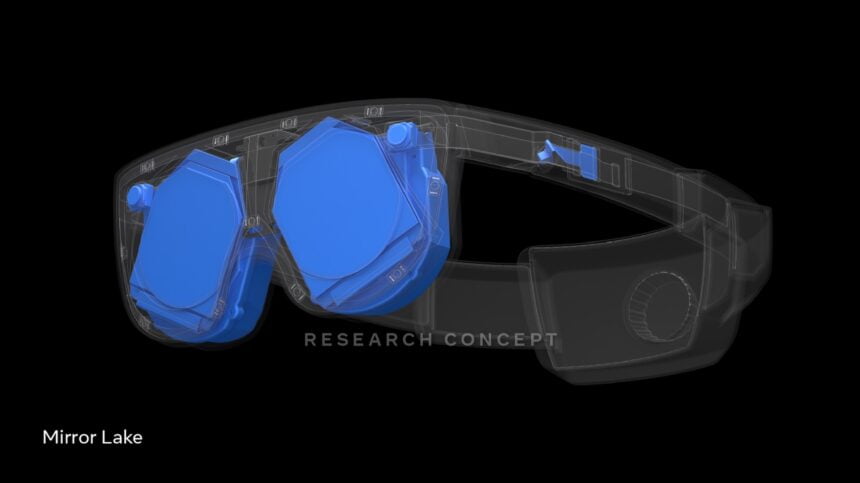

Mirror Lake is Meta’s final boss

Half-Dome, Butterscotch, Starburst and Holocake 2 demonstrate new display systems that should help pass the visual Turing test. Meta's next goal is to combine all these technologies into a lightweight, slim and low-power VR headset.

The latest prototype, called Mirror Lake, serves exactly that purpose. For now, though, it is better described as a research concept: Meta has not yet managed to build a working headset of this kind, nor has it shown a prototype.

A rendering of the planned Mirror Lake prototype. | Image: Meta

The holographic lens of the Holocake 2 prototype is the technical foundation, as it allows other technologies (varifocal display, multi-view eye tracking, distortion correction, and adjustable prescription correction) to be integrated into a slim, ski-goggle form factor.

Mirror Lake is also said to offer new passthrough technology and external displays that show the user's eyes and face to the outside world. Meta unveiled a prototype to that effect just under a year ago.

Of all the VR prototypes, Mirror Lake is still the farthest from commercialization and could be abandoned in favor of other display technologies if they prove more promising.

Below is a 30-minute video in which Mark Zuckerberg and Michael Abrash talk in more detail about VR display research and what it means for Virtual Reality.

Note: Links to online stores in articles can be so-called affiliate links. If you buy through this link, MIXED receives a commission from the provider. For you the price does not change.