How Meta's AR Engine helps build a large-scale AR platform for everyone

Meta's AR Engine makes it easier for developers to create engaging augmented reality experiences that work on almost any device, potentially reaching millions of users worldwide.

Meta has been refining its Spark AR toolkit, which aims to simplify cross-platform development. The idea is to make it easier to create an augmented reality experience that can run on a smartphone, VR/XR headset, or computer without redesigning it for each platform. This same AR engine could help pave the way for future augmented reality glasses like Meta's Project Nazare.

AR runs on dramatically different devices with varied capabilities. Screens range from handheld phones, which provide a small, movable window on an augmented view, to laptop screens and computer monitors, which offer a larger view in a fixed position, to the 360-degree immersive experience of a VR headset.

The performance of a fast computer far exceeds that of even the best mobile devices, which makes it a challenge to scale tracking, graphics resolution, and special-effect quality to match each device's internet bandwidth and processing power. Future devices, such as the Meta Quest 3, will be faster, but Meta needs developers working on AR solutions now so that they are ready when AR hardware has its breakthrough moment.

Finding creative inspiration while facing technical challenges can be difficult. That's why Meta is refining an AR engine meant to simplify this process.

Making AR experiences more creative

AR offers many opportunities for creative exploration. A popular smartphone example is tracking your face in Facebook Messenger and applying effects that follow your movement. It's also possible to switch to a smartphone's rear camera or use a Quest Pro's mixed reality mode to create graphics and effects that appear within your environment. Virtual avatars can walk around your room, computer-generated snow can fall indoors, and virtual balls can bounce off your walls, as we've seen in Meta's The World Beyond and other mixed reality apps for Quest.

Audio can play a role in AR, too, signaling direction in subtle and realistic ways to encourage you to turn around and see what's happening to the side or behind you. This opens up creative storytelling opportunities that might be difficult to convey with visual effects alone.

Ultimately, Meta wants to make it easier to focus on creating instead of being caught up in the technical details. In particular, optimizing an experience to run well across devices can become a barrier to creativity.

Optimizing is a game of inches

Balancing optimization decisions against the desire to create a rich experience that everyone can enjoy is a difficult task. Face, hand, and world tracking place a burden on hardware, particularly when adding particle effects and re-projecting images and animations to fit within the room. Meta has the resources and data to help solve these problems, and that's the goal of the Spark AR engine.

Meta pointed out that optimizing AR experiences is a game of inches. That is, a significant amount of effort leads to a small improvement, but by taking several small steps toward a better solution, larger gains are possible.
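To put rough numbers on the "game of inches" idea, here is a minimal sketch using made-up figures, not measurements from Meta. It assumes each optimization trims a fixed fraction of whatever frame time remains, so the savings compound. The function name, the 20 ms starting point, and the 3% step size are all hypothetical.

```python
def frame_time_after(start_ms: float, savings: list[float]) -> float:
    """Apply each fractional saving to the frame time left after the previous one."""
    frame_ms = start_ms
    for saving in savings:
        frame_ms *= 1.0 - saving
    return frame_ms


# Hypothetical example: ten separate optimizations, each saving 3% of frame time.
start_ms = 20.0
end_ms = frame_time_after(start_ms, [0.03] * 10)
total_saving = 1.0 - end_ms / start_ms
print(f"{start_ms:.1f} ms -> {end_ms:.2f} ms ({total_saving:.0%} less frame time)")
```

With these illustrative inputs, ten steps of 3% each compound to roughly a quarter less frame time, even though no single step looks impressive on its own.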

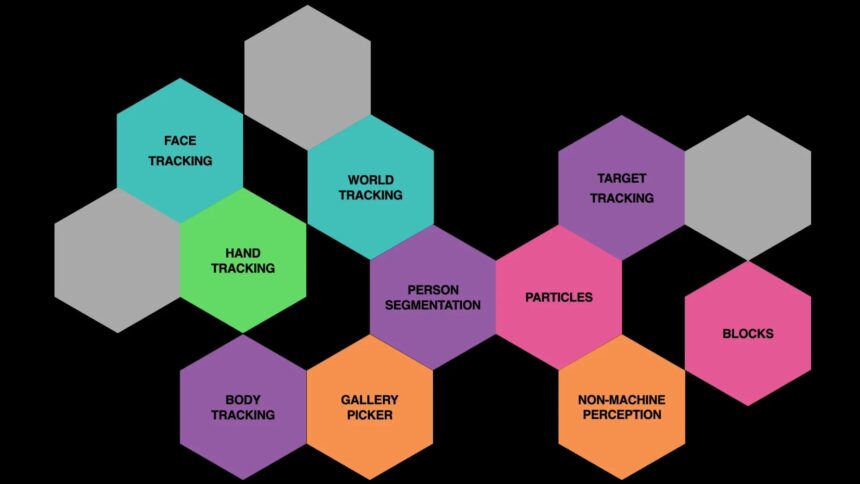

Meta's AR runtime uses optional plugins, including articulated avatars, so developers can choose from a library of features to include.

Since these plugins are already optimized for each platform, AR creators can design a richer game or experience with less hassle. Facebook, Instagram, and WhatsApp support the Spark AR engine on mobile devices, computers load the AR library in a web browser, and the Quest platform is supported as well.
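The opt-in plugin idea can be sketched in a few lines. This is a toy illustration only, not the Spark AR API: the registry, the `plugin` decorator, `build_experience`, and every plugin and platform name below are hypothetical. It assumes an experience lists the features it needs and the runtime sets up only those, each prepared for the target platform.

```python
from typing import Callable

# Hypothetical registry: feature name -> function that prepares it for a platform.
PLUGINS: dict[str, Callable[[str], str]] = {}


def plugin(name: str):
    """Register a feature under the given name so experiences can opt into it."""
    def register(factory: Callable[[str], str]) -> Callable[[str], str]:
        PLUGINS[name] = factory
        return factory
    return register


@plugin("face_tracking")
def face_tracking(platform: str) -> str:
    return f"face_tracking[{platform}]"


@plugin("articulated_avatars")
def articulated_avatars(platform: str) -> str:
    return f"articulated_avatars[{platform}]"


def build_experience(platform: str, features: list[str]) -> list[str]:
    """Set up only the requested features; reject names the library lacks."""
    unknown = [name for name in features if name not in PLUGINS]
    if unknown:
        raise ValueError(f"unknown plugins: {unknown}")
    return [PLUGINS[name](platform) for name in features]


# The same feature list can be built for different (hypothetical) targets.
print(build_experience("mobile", ["face_tracking"]))
print(build_experience("headset", ["face_tracking", "articulated_avatars"]))
```

The point of the design is that the creator's feature list stays the same across targets, while the per-platform work lives inside each plugin.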

Meta's Spark AR engine is just one of many solutions available to AR developers and creators. Google and Apple have AR toolkits that run on mobile devices, and Snapchat Lenses have been around since 2015. Google recently laid out its AR plans to create more immersive experiences as well.

2/26/2023: An earlier version of this article stated that Meta introduced a new AR engine, which was wrong. It's now corrected.
