NeuralPassthrough: Meta shows AI-based AR for VR headsets

Good VR passthrough is a major technical challenge. Reality Labs researchers demonstrate a new, AI-based solution.

Headsets like Varjo XR-3, Quest Pro, Lynx R-1, and Apple's upcoming device will be the best way to experience augmented reality in the coming years.

Unlike traditional AR headsets with transparent optics such as Hololens 2 and Magic Leap 2, which project AR elements directly into the eye via a waveguide display, these headsets film the physical environment with outward-facing cameras and show the footage on opaque displays. The camera image can then be augmented with AR elements as desired.

Artificial view synthesis: a big problem

This technology, called passthrough AR, has major advantages over classic AR optics, but it also has its own problems. The challenge is to reconstruct the physical environment from sensor data so that it looks as if the person wearing the headset were seeing it with their own eyes. This is an enormously difficult task.

Resolution, color fidelity, depth representation, and perspective must all match the natural visual impression and update with as little latency as possible when the head moves.

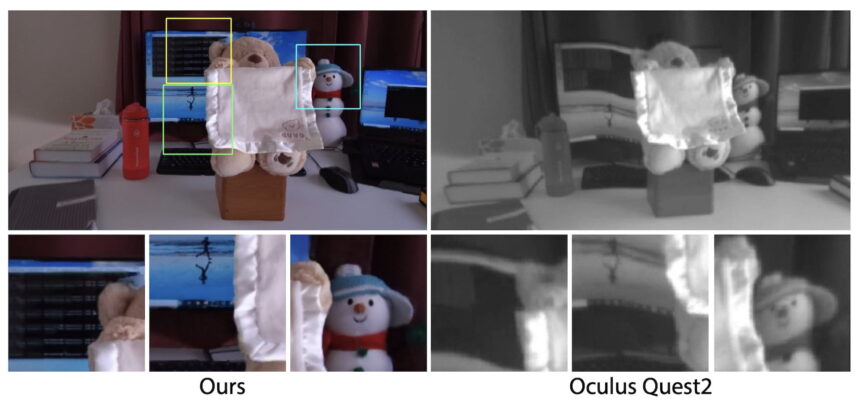

NeuralPassthrough and Passthrough+ of the Meta Quest 2 in comparison. | Image: Reality Labs Research

Perspective in particular poses great difficulties, because the positions of the cameras do not exactly match the positions of the eyes. This perspective shift can lead to discomfort and visual artifacts. The artifacts arise because the cameras may not see the sides of a close object or of the hands, even though the eyes would see them from their natural viewpoint.
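
To get a rough feel for the size of this perspective shift, a simple pinhole camera model helps: for two parallel views separated by a small sideways offset, a point shifts in the image by the focal length times the offset divided by the point's depth. The following Python sketch is only an illustration. The function name and all numbers (a focal length of 500 pixels, a camera-to-eye offset of 5 cm) are assumptions, not values from the research, and real headset cameras are usually also displaced forward, which this model ignores.

```python
def parallax_shift_px(focal_px: float, offset_m: float, depth_m: float) -> float:
    """Image shift in pixels between two parallel pinhole views.

    focal_px: focal length expressed in pixels (assumed value).
    offset_m: sideways distance between camera and eye in meters (assumed value).
    depth_m:  distance of the observed point from the viewer in meters.
    """
    if depth_m <= 0:
        raise ValueError("depth_m must be positive")
    return focal_px * offset_m / depth_m


# Hypothetical numbers: a hand at 25 cm versus a wall at 5 m.
for depth in (0.25, 1.0, 5.0):
    shift = parallax_shift_px(focal_px=500.0, offset_m=0.05, depth_m=depth)
    print(f"depth {depth:4.2f} m -> shift of about {shift:.0f} px")
```

With these assumed values, the shift for the nearby hand is twenty times larger than for the distant wall, which fits the observation that distortions are worst for objects held close to the face.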

The Meta Quest 2 shows the current state of passthrough technology available to consumers: the view of the surroundings is black and white, grainy, and distorted, especially for objects held close to the face.

The devices mentioned above will soon deliver better results, or already do, because their sensors are optimized for passthrough. However, you should not expect a perfect image of the physical environment, since many of the basic problems described above have not yet been solved.

AI reconstruction yields high-quality results

In August, Meta researchers unveiled several technical innovations for VR at Siggraph 2022, including a new passthrough method that reconstructs visual perspective using artificial neural networks. The technique is called NeuralPassthrough.

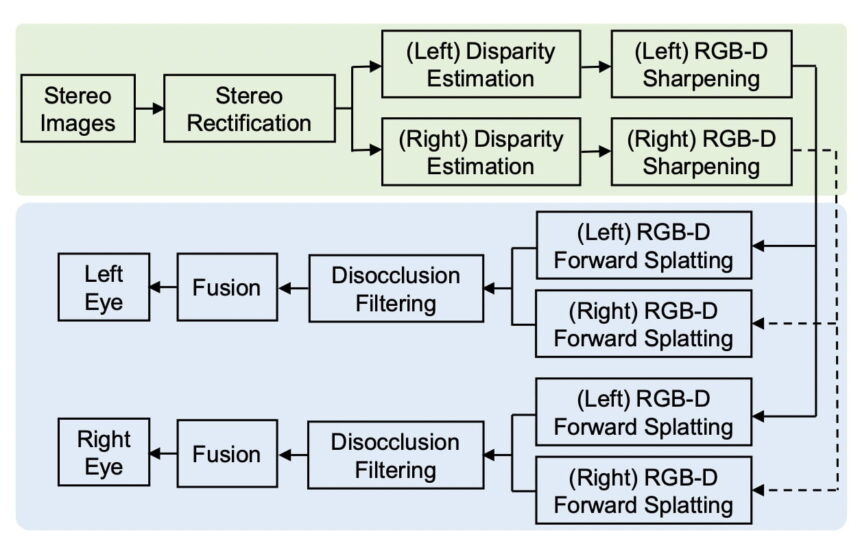

"We introduce NeuralPassthrough to leverage recent advances in deep learning, solving passthrough as an image-based neural rendering problem. Specifically, we jointly apply learned stereo depth estimation and image reconstruction networks to produce the eye-viewpoint images via an end-to-end approach, one that is tailored for today’s desktop-connected VR headsets and their strict real-time requirements," the research paper states.

Not all passthrough is the same. The stereo footage from the cameras goes through a variety of AI-driven adjustments before being output to the displays. | Image: Xiao et al. / Reality Labs Research

The AI algorithm estimates the depth of the room and the objects in it and reconstructs an artificial viewpoint that corresponds to that of the eyes. The model was trained on synthetic data sets: image sequences showing 80 spatial scenes from different viewing angles. The resulting artificial neural network is flexible and can be applied to different cameras and eye distances.
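
The basic idea of "estimate depth, then re-render from the eye's position" can be illustrated with a classical depth-based forward warp. The Python sketch below is not the NeuralPassthrough network, which uses learned stereo depth estimation and a learned reconstruction step. It is a toy version for a single image row, and the function name, the parameters, and the sample values are all assumptions made for illustration.

```python
def reproject_scanline(colors, depths, focal_px, offset_m):
    """Forward-warp one image row from the camera view to a shifted eye view.

    colors:   pixel values of the row as seen by the camera.
    depths:   estimated depth in meters for each pixel (same length as colors).
    focal_px: focal length in pixels (assumed value).
    offset_m: sideways offset of the eye relative to the camera in meters.

    Returns the row as seen from the eye. Pixels that no camera pixel maps to
    stay None; these are the holes that a reconstruction step would have to fill.
    """
    width = len(colors)
    out = [None] * width
    zbuf = [float("inf")] * width  # nearest depth written so far per target pixel
    for x, (color, z) in enumerate(zip(colors, depths)):
        shift = focal_px * offset_m / z      # closer points shift further
        tx = round(x - shift)                # target column in the eye view
        if 0 <= tx < width and z < zbuf[tx]:
            out[tx] = color                  # nearer surfaces win over farther ones
            zbuf[tx] = z
    return out


# Toy row: a distant background with one near pixel ("F") in front of it.
row = list("ABCDEFGH")
depth = [10.0, 10.0, 10.0, 10.0, 10.0, 2.5, 10.0, 10.0]
print(reproject_scanline(row, depth, focal_px=10.0, offset_m=1.0))
```

In this toy row, the near pixel moves further than the background and covers part of it, while the spot it used to hide becomes a hole with no camera data. Gaps of this kind correspond to the disocclusion artifacts the researchers mention.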

Perfect Passthrough AR still has a "truly long road" ahead of it

The results are promising. Compared to Meta Quest 2 and other passthrough methods, NeuralPassthrough delivers better image quality and meets the requirements for perspective-correct stereoscopic view synthesis, as the following video shows.

Nevertheless, the technique has certain limitations. For example, the quality of the result depends heavily on how accurately the AI estimates the depth of the space. Depth sensors could improve the result in the future. Another challenge is view-dependent reflections on objects, which the AI model cannot reconstruct and which therefore lead to artifacts.

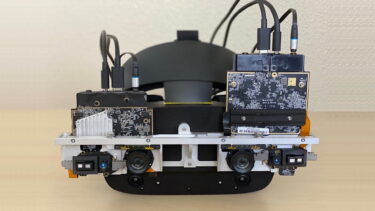

Another problem is computing power: the prototype built especially for research purposes (see cover picture) is powered by a desktop computer with an Intel Xeon W-2155 and two Nvidia Titan V cards, one high-end graphics card per eye.

The result is a passthrough image with a resolution of 1,280 x 720 pixels and a latency of 32 milliseconds. This is too low a resolution with too high a latency for a high-quality passthrough.
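
A quick back-of-the-envelope calculation shows why 32 milliseconds is noticeable. The Python sketch below converts the latency into display frames and into the angle by which the image trails a turning head. The 90 Hz refresh rate and the head speed of 100 degrees per second are assumptions chosen for illustration, not figures from the research, and the function names are hypothetical.

```python
def latency_in_frames(latency_ms: float, refresh_hz: float) -> float:
    """Number of display refresh intervals that pass during the latency."""
    return latency_ms / 1000.0 * refresh_hz


def angular_lag_deg(latency_ms: float, head_speed_deg_per_s: float) -> float:
    """Angle the head turns before the matching image reaches the display."""
    return latency_ms / 1000.0 * head_speed_deg_per_s


LATENCY_MS = 32.0           # latency reported for the prototype
REFRESH_HZ = 90.0           # assumed display refresh rate
HEAD_SPEED_DEG_S = 100.0    # assumed speed of a moderate head turn

print(f"{latency_in_frames(LATENCY_MS, REFRESH_HZ):.2f} frames of delay")
print(f"{angular_lag_deg(LATENCY_MS, HEAD_SPEED_DEG_S):.1f} degrees of lag")
```

Under these assumptions, the image arrives almost three frames late and trails the head by about three degrees during the turn.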

"To deliver compelling VR passthrough, the field will need to make significant strides both in image quality (i.e., suppressing notable warping and disocclusion artifacts), while meeting the strict real-time, stereoscopic, and wide-field-of-view requirements. Tacking on the further constraint of mobile processors for wearable computing devices means that there truly is a long road ahead," the scientists write.
