VividQ promises breakthrough for spatial AR optics

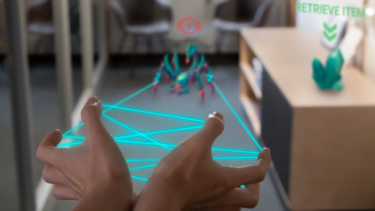

The waveguide optics from VividQ and Dispelix display objects at variable focal depths. AR gaming could also benefit from a wide field of view.

Holographic display developer VividQ and waveguide manufacturer Dispelix report an advance that could improve gaming on transparent AR headsets. Their proprietary solution is said to deliver a sharp image with variable focal depth. Other claimed advantages include a wide field of view and an eyebox large enough for people with different interpupillary distances (IPD).

A critical requirement for a good AR game, according to VividQ, is the ability to render objects accurately at different focal depths. If an insect is crouching at the end of a real corridor, the eye should focus at that distance. If it jumps onto your hand, the focus should shift to close range.

Natural focus for AR headsets

According to VividQ, waveguide displays have so far been unable to reproduce this effect properly. The company claims that its proprietary "3D Waveguide Combiner" and the accompanying software are the breakthrough. AR lenses, also known in the industry as "combiners," merge natural and digital light to create believable augmented reality.

Computer graphics objects should blend more naturally into the real world with proper focus. | Image: VividQ

In the Magic Leap or Microsoft HoloLens AR headsets, light from a small projector travels through a 2D waveguide as parallel rays until it reaches the eye. The result, however, is a 2D image at a fixed focal distance. Even when a virtual insect sits directly on a real hand, the focus remains in the distance.

"Objects cannot be interacted with naturally at arm’s length, and they are not placed exactly within the real world," VividQ explains. The result not only looks unnatural but also triggers the vergence-accommodation conflict (VAC): the eyes converge on the nearby virtual object while still having to focus at the display's fixed distance. This can cause headaches or eye pain.

Previous 3D waveguides have failed because of distortion. A spatial image requires the use of diverging beams. Because they are reflected back and forth several times as they travel through the narrow waveguide, their paths diverge sharply. The result is a blurry image with many overlapping copies of the same image.

This is where VividQ's solution comes in. The company has an algorithm for correcting the waveguide's faulty image: The software calculates a hologram that corrects the distortions. In addition, the waveguide itself is slightly modified to work in harmony with the algorithm.

3D waveguide for AR apps

That's why the two companies license their hardware and software to AR glasses makers only as a combined system. Without the proprietary algorithm, the solution cannot be used, according to VividQ. "We’ve solved that problem, designed something that can be manufactured, tested and proven it, and established the manufacturing partnership necessary to mass produce them," said VividQ CEO Darran Milne.

In an industry plagued by hype, it's easy to dismiss inventions as old ideas in new packaging, said Milne. "But a fundamental problem has always been the complexity of displaying 3D images placed in the real world with a decent field of view and with an eyebox large enough to accommodate a wide range of IPDs."

The announcement does not give exact figures for the field of view. For augmented reality to reach the mass market, however, a "sufficient" field of view is important, as is natural focus at distances from ten centimeters to infinity.
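
To put the focus range and the vergence-accommodation conflict into numbers, here is a minimal Python sketch. It is not taken from VividQ's announcement: the 63 mm interpupillary distance and the 2 m fixed focal plane are illustrative assumptions, and the function names are hypothetical. The only physics used is that focus demand in diopters is the reciprocal of the distance in meters, and that the vergence angle follows from simple triangle geometry.

```python
import math

# Assumed average interpupillary distance; not a figure from the announcement.
ASSUMED_IPD_M = 0.063


def focus_demand_diopters(distance_m: float) -> float:
    """Accommodation demand in diopters: 1 / distance in meters (0 at infinity)."""
    return 0.0 if math.isinf(distance_m) else 1.0 / distance_m


def vergence_angle_deg(distance_m: float, ipd_m: float = ASSUMED_IPD_M) -> float:
    """Angle between both lines of sight when fixating a point straight ahead."""
    return math.degrees(2.0 * math.atan((ipd_m / 2.0) / distance_m))


def vac_mismatch_diopters(object_m: float, focal_plane_m: float) -> float:
    """Gap between where the eyes converge and where the display forces focus."""
    return abs(focus_demand_diopters(object_m) - focus_demand_diopters(focal_plane_m))


if __name__ == "__main__":
    # Focus range named in the article: ten centimeters to infinity.
    for d in (0.1, math.inf):
        print(f"distance {d} m -> {focus_demand_diopters(d):.1f} D")

    # Virtual insect on a hand at 0.3 m, display focused at a hypothetical 2 m.
    print(f"vergence angle: {vergence_angle_deg(0.3):.1f} degrees")
    print(f"focus mismatch: {vac_mismatch_diopters(0.3, 2.0):.2f} D")
```

Under these assumptions, the range of ten centimeters to infinity spans 10 to 0 diopters, and an object on the hand at 0.3 m leaves a mismatch of roughly 2.8 diopters against a 2 m focal plane, which is the gap a variable-focus display would have to close.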

Founded in 2017 in Cambridge, England, VividQ first developed a mixed-reality headset and later worked with Arm on holographic smartphone displays for AR headsets. In 2021, the startup raised $15 million to develop technology that turns traditional LC screens into holo-displays with 3D depth.

Dispelix founder and CEO Antti Sunnari sees the potential of the new 3D waveguide combiner primarily in AR gaming and professional use. To be able to comfortably stay in immersive environments for long periods of time, he says, it is essential to have real spatial content placed in the user's environment.

Different ways to view augmented reality

Swiss company Creal is taking a different approach to AR lenses. The startup is betting on another type of combiner based on holographic optical elements (HOEs). According to a September 2022 white paper, HOEs for AR headsets are transparent to almost all real-world light but reflect almost all light projected from a display.

The first working lens prototype appeared in the summer of 2021, delivering high-quality 3D images and supporting Creal's light field technology, which can simulate different depth planes for AR elements. Sony, Intel, and North have also developed and deployed HOEs for AR devices.
