Photorealistic Avatars: Meta's research makes progress

Meeting people virtually as if they were in the same room: that's Meta's big goal. The Codec Avatars are intended to fulfill this promise one day.

In spring 2019, Meta first presented its research on sophisticated avatars that are meant to replace video conferences one day.

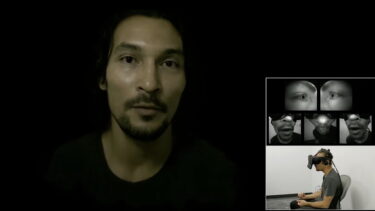

Users wear a prototype VR headset that records eye and mouth movement using five sensors. With the help of an AI model, an impressively realistic avatar is generated in real time from this data, including eye movements and the finest facial expressions.

Users first have to be scanned in an elaborate 3D studio. The research team hopes that in the future people will be able to create a high-quality 3D scan of their own face, for example with a smartphone.

Currently, Meta relies on cartoon-style avatars, a concession to the severely limited processing power of the Meta Quest 2.

Codec Avatars 2.0 run on Quest 2

In recent years, Meta has gradually improved the Codec Avatars by optimizing the AI algorithms and giving the avatars more realistic eyes.

During an MIT workshop, team leader Yaser Sheikh presented the current state of the research and showed a short video to demonstrate the latest version of the photorealistic avatars, "Codec Avatars 2.0."

In a scientific paper from last year, the researchers describe recent AI advances. According to the paper, the artificial neural network can compute only the pixels of an avatar that are actually visible. This makes it possible to render up to five Codec Avatars on a Quest 2 at 50 frames per second.
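To put that figure in perspective, here is a rough back-of-the-envelope calculation. It only converts the frame rate into a time budget per frame; the even split across five avatars is an assumption made for illustration, and the function names are hypothetical. The paper's actual per-avatar cost is not stated here.

```python
def frame_budget_ms(fps: float) -> float:
    """Time available to produce a single frame, in milliseconds."""
    return 1000.0 / fps


def per_avatar_budget_ms(fps: float, avatars: int) -> float:
    """Upper bound on time per avatar.

    Assumes (for illustration only) that the frame time is split evenly
    across avatars and that nothing else competes for it.
    """
    return frame_budget_ms(fps) / avatars


if __name__ == "__main__":
    print(frame_budget_ms(50))          # 20.0 ms per frame at 50 fps
    print(per_avatar_budget_ms(50, 5))  # 4.0 ms per avatar with five avatars
```

In practice the headset also has to render the rest of the scene within the same frame, so the real budget per avatar would be smaller than this upper bound.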

According to Sheikh, it is impossible to predict when the avatar technology will be ready for production. At the workshop, he said that when the project began, it was "ten miracles" away from commercialization. Today, he said, it is "only" five miracles away.

Special chip speeds up Codec Avatars

Road to VR also reported on Codec Avatars, summarizing a paper from April 2022.

According to the paper, a group of researchers has developed a special AI chip that speeds up the computation of Codec Avatars on standalone VR headsets. The chip, which measures only 1.6 square millimeters, was optimized for the avatar system and specializes in processing sensor data. In turn, the AI model was adapted to the chip's architecture.

The result of these optimizations: encoding the Codec Avatars runs faster, while energy consumption and heat generation are lower. The XR2 chip remains responsible for decoding and for the actual rendering of the avatars.

Zuckerberg previously said in a podcast with Lex Fridman that Meta will prioritize realistic social interaction in VR, even if the technology required to do so leads to more expensive, clunkier headsets.
