This is what VR hands should look like: Developer shows realistic interactions

A developer spent two years building a toolkit for hand interactions. The result is impressive.

Hand interactions are the be-all and end-all of believable virtual reality. What looks so simple in real life is highly complex in VR: the virtual hands have to adapt to each object and simulate how and where they touch, grasp and pick it up.

Programming such a system is a Herculean task in itself. That's why Unity developer Earnest Robot set out to create a ready-to-use toolkit for developers. On Reddit and Twitter, he shows off the fruits of his two-year effort.

Auto Hand: User-friendly and customizable

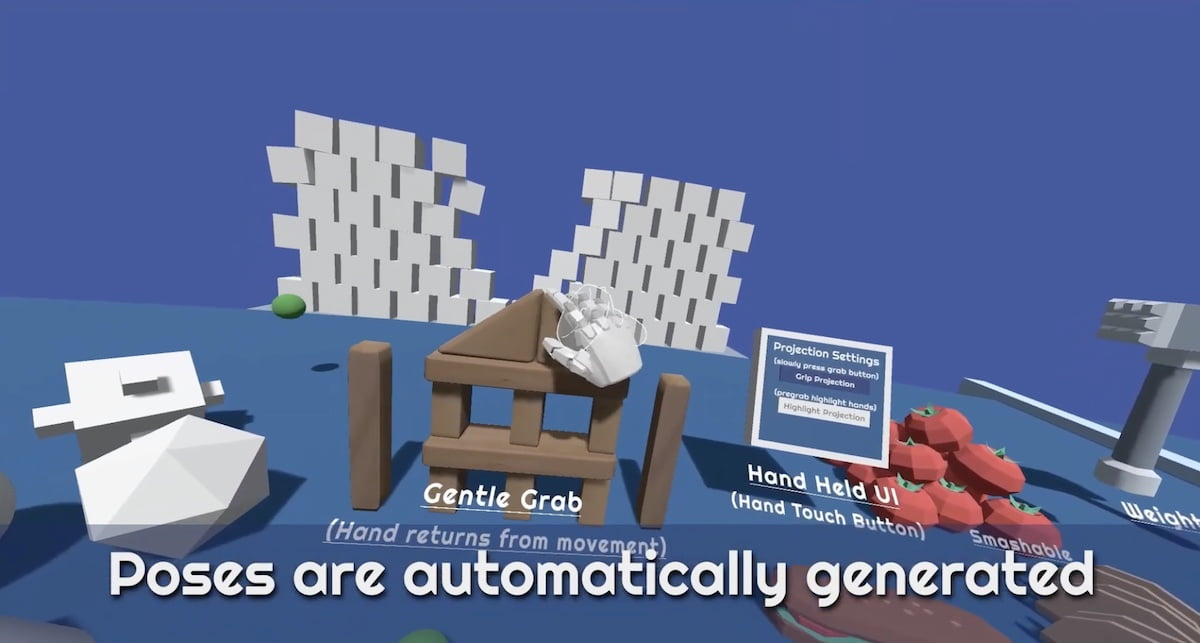

Auto Hand is a Unity toolkit that automatically generates hand poses depending on how you interact and with which objects. The interaction system simulates weight and resistance, two-handed grasping, and pulling apart, crushing and throwing objects, as well as interactions with levers, sliders, doors, controls and buttons. Locomotion via gestures is also part of the kit.

The developer demonstrates hand interactions with VR controllers, but also includes a hand tracking demo for Quest devices. Developers can adapt the interaction system to their needs. Earnest Robot promises a highly customizable, user-friendly system with low performance requirements.

Advances in hand interactions

Some studios have already implemented believable hand interactions at this level. In particular, the VR games Lone Echo (2017) and Half-Life: Alyx (2020) set standards, but both were developed by large teams. The latter inspired him while working on the toolkit, the developer writes on Reddit.

In the field of hand tracking, Swiss developer Dennys Kuhnert has made a name for himself. His VR app Hand Physics Lab is the current benchmark and also works with VR controllers. With his start-up Holonautic, Kuhnert offers hand tracking courses for players in the XR industry.

Because developing hand interactions is time-consuming, Meta released a similar toolkit in early February. Called the Interaction SDK, it animates hands believably, with or without VR controllers.

This does not render Earnest Robot's solution obsolete: for one thing, developers can combine interactions from both toolkits as needed, and for another, Earnest Robot's software is not tied to Meta's ecosystem.

Auto Hand can be found in the Unity Asset Store. There is also a link to a demo. An App Lab release for Meta Quest (2) is on the developer's to-do list.


Note: Links to online stores in articles may be affiliate links. If you buy through such a link, MIXED receives a commission from the provider. The price does not change for you.