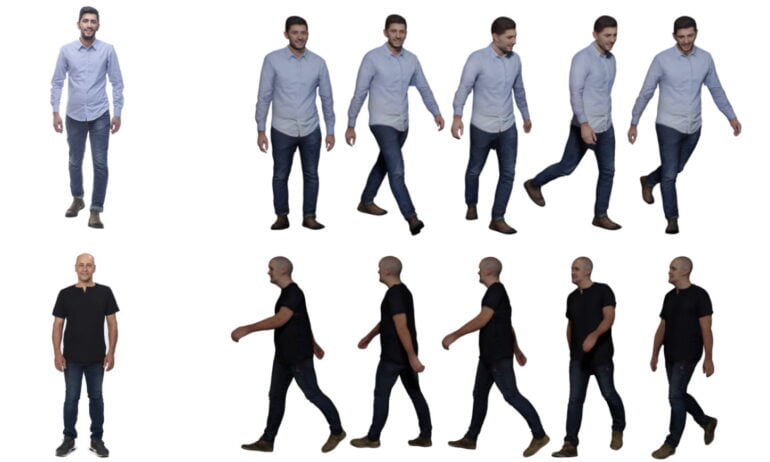

Google's PHORUM AI shows that impressive 3D avatars can be created from just a single photo.

Readily available high-quality 3D scans of people have numerous applications: image processing, virtual fittings in online retail, telepresence, and, of course, digital avatars for AR and VR in the metaverse.

Until now, however, such models have required elaborate automated scanning with multi-camera rigs, manual creation by artists, or a combination of both. Even the best camera systems still produce artifacts that have to be cleaned up by hand.

AI is meant to simplify this process and produce high-quality 3D avatars from a few photos or even a single one. To do so, the models need to reconstruct both the 3D geometry and a range of surface properties, such as color, reflectance, shading, and surface normals.

Google's PHORUM outperforms alternative AI models

Many projects tackle this task, but they do not provide all relevant surface properties and often still rely on individual non-learned modules in their pipeline.

Google researchers have now presented PHORUM, a system that reconstructs 3D avatars from a single photograph. PHORUM is trainable end to end and predicts properties that alternative systems have so far ignored, such as albedo (the surface's base color, independent of lighting) and shading.
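Why it helps to separate albedo from shading can be illustrated with the simple intrinsic-image model common in computer graphics: the observed color of a surface point is its albedo multiplied by a shading term. The sketch below assumes a Lambertian (purely diffuse) surface and a single directional light; the function names are hypothetical, and this is not PHORUM's actual method, which learns these quantities with a neural network.

```python
import math

Vec3 = tuple[float, float, float]


def normalize(v: Vec3) -> Vec3:
    """Scale a 3D vector to unit length."""
    length = math.sqrt(sum(c * c for c in v))
    return (v[0] / length, v[1] / length, v[2] / length)


def lambert_shading(normal: Vec3, light_dir: Vec3, intensity: float = 1.0) -> float:
    """Diffuse shading (assumed Lambertian): intensity times the clamped
    cosine of the angle between surface normal and light direction."""
    n = normalize(normal)
    l = normalize(light_dir)
    cos_theta = sum(a * b for a, b in zip(n, l))
    return intensity * max(0.0, cos_theta)


def shade(albedo: Vec3, shading: float) -> Vec3:
    """Observed RGB color = albedo (intrinsic color) scaled by shading."""
    return (albedo[0] * shading, albedo[1] * shading, albedo[2] * shading)


def recover_albedo(observed: Vec3, shading: float, eps: float = 1e-6) -> Vec3:
    """Invert the model: dividing out shading yields the lighting-free color."""
    s = max(shading, eps)
    return (observed[0] / s, observed[1] / s, observed[2] / s)


if __name__ == "__main__":
    fabric = (0.8, 0.1, 0.1)  # hypothetical red fabric albedo
    s = lambert_shading(normal=(0.0, 0.0, 1.0), light_dir=(0.0, 1.0, 1.0))
    observed = shade(fabric, s)
    print(observed, recover_albedo(observed, s))
```

In a photo, only the observed color is available; a system that also predicts shading can divide it out and keep a lighting-independent albedo, which is what makes the later relighting possible.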

PHORUM was trained on a mix of rendered images, composited against HDR image backgrounds, and the corresponding meshes. In total, the team used 217 scans of people in different poses and outfits, some of them holding handbags or other objects. With additional variations, such as changing the colors of clothing, the dataset comprises nearly 190,000 images.

PHORUM produces more realistic results than alternative methods such as PIFu and fills in clothing details that are not visible in the photo, such as the back of a pair of pants. Because the system also estimates numerous surface properties, the 3D avatars can be placed into new digital environments. For example, the lighting of a new image can be transferred to the 3D avatar, which can then be inserted into a group photo.
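Once albedo and surface normals are known separately, placing an avatar into a new scene essentially means recomputing shading under that scene's lighting. The minimal sketch below assumes the target lighting can be approximated by a few directional lights plus a constant ambient term; `relight` and the light list format are hypothetical and do not describe how PHORUM actually represents illumination.

```python
import math

Vec3 = tuple[float, float, float]
Light = tuple[Vec3, float]  # (direction toward the light, intensity)


def normalize(v: Vec3) -> Vec3:
    """Scale a 3D vector to unit length."""
    length = math.sqrt(sum(c * c for c in v))
    return (v[0] / length, v[1] / length, v[2] / length)


def relight(albedo: Vec3, normal: Vec3, lights: list[Light], ambient: float = 0.0) -> Vec3:
    """Re-render one surface point under new lighting.

    Assumes a Lambertian surface: each directional light contributes its
    intensity times the clamped cosine between normal and light direction,
    and a constant ambient term is added on top.
    """
    n = normalize(normal)
    shading = ambient
    for direction, intensity in lights:
        l = normalize(direction)
        shading += intensity * max(0.0, sum(a * b for a, b in zip(n, l)))
    return (albedo[0] * shading, albedo[1] * shading, albedo[2] * shading)


if __name__ == "__main__":
    fabric = (0.8, 0.1, 0.1)   # albedo stays fixed
    normal = (1.0, 0.0, 0.0)   # point faces +x
    # Same point, two hypothetical target scenes: lit from the front vs. from behind.
    print(relight(fabric, normal, [((1.0, 0.0, 0.0), 1.0)]))
    print(relight(fabric, normal, [((-1.0, 0.0, 0.0), 1.0)], ambient=0.2))
```

The albedo never changes between the two calls; only the lighting does. Without a separate albedo estimate, the shading baked into the original photo would carry over into every new scene.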

Systems like PHORUM need more data

The 3D avatars reconstructed by PHORUM can also be animated afterwards, so the system could also simplify working with 3D scans for CGI and video games.

According to the researchers, PHORUM still struggles to reconstruct loose, oversized, and non-Western clothing. In some cases, the front and back of a digital person do not match; for example, a pair of pants may have a different fabric on the front than on the back. The researchers say these problems could be addressed with more geographically and culturally diverse datasets.

In addition, the resolution of the generated 3D avatars is fairly low: the training images measure 512 by 512 pixels, and the results have a similar resolution. For now, this rules out practical use of PHORUM in industry.

However, the technology could likely reach better quality in the future, for example through AI upscalers, better training data, and new architectures. A similar trajectory has already played out in image generation with GANs and diffusion models such as DALL-E 2.

More details about PHORUM and further examples are available on the GitHub project page.